This article explains the landmark paper "Attention Is All You Need" by Vaswani et al. (2017), which introduced the Transformer architecture that powers GPT, BERT, and nearly every modern language model.

Introduction

When the paper was published, the dominant sequence transduction models were based on complex recurrent or convolutional neural networks that include an encoder and a decoder. The best-performing models also connected the encoder and decoder through an attention mechanism.

The Transformer is an architecture based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. The paper's machine translation experiments show these models to be superior in quality while being more parallelizable and requiring significantly less time to train.

The Sequential Bottleneck

Before the Transformer, the dominant approach to sequence tasks—machine translation, language modeling, text generation—was the recurrent neural network (RNN), particularly LSTMs and GRUs.

RNNs process sequences one token at a time. To compute the hidden state at position $t$, you need the hidden state at position $t-1$:

$$h_t = f(h_{t-1}, x_t)$$

Here $x_t$ is the input at position $t$ and $f$ is the recurrent cell.

This creates two fundamental problems:

Lack of Parallelization

Because each step depends on the previous step, you cannot parallelize computation within a single sequence. Training is inherently sequential in time. For long sequences, this becomes a severe bottleneck.
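The dependency is easy to see in a minimal sketch of a recurrent update. The scalar weights `w_h` and `w_x` and the `tanh` nonlinearity are illustrative assumptions, not a model from the paper; the point is only that each loop iteration needs the result of the previous one.

```python
import math

def rnn_hidden_states(inputs, w_h=0.5, w_x=1.0, h0=0.0):
    """Run a toy scalar RNN: h_t = tanh(w_h * h_{t-1} + w_x * x_t).

    The weights are hypothetical placeholders chosen for illustration.
    """
    states = []
    h = h0
    for x in inputs:
        # The new h cannot be computed until the previous h is known,
        # so the loop over time steps cannot be run in parallel.
        h = math.tanh(w_h * h + w_x * x)
        states.append(h)
    return states

states = rnn_hidden_states([1.0, 0.0, -1.0])
```

Parallelism across different sequences in a batch is still possible; it is the time axis within one sequence that is serialized.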

Long-Range Dependencies

Information from early tokens must survive many sequential steps to influence later processing. Gradients must flow backward through all those steps. In practice, this makes learning long-range dependencies difficult, even with gating mechanisms like those in LSTMs.

The Transformer addresses both problems by replacing recurrence with attention.

Attention as a Lookup

The core idea of attention is surprisingly simple: it is a soft lookup into a set of values, where the similarity between a query and each key determines how much weight to give each value.

Think of it like a database query:

- You have a query (what you’re looking for)

- You have a set of keys (labels for stored items)

- You have a set of values (the stored items themselves)

The attention mechanism compares your query to each key, computes a relevance score, and returns a weighted combination of the values.
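As a rough illustration of the lookup analogy, the sketch below scores one query against two keys and blends the stored values. The function name `soft_lookup` and the toy vectors are made up for this example, and the dot product plus softmax is one common choice of relevance score.

```python
import math

def soft_lookup(query, keys, values):
    """Return a weighted average of `values`, weighted by query-key similarity."""
    # Relevance score of the query against each key (dot product).
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    # Softmax turns the scores into positive weights that sum to 1.
    max_score = max(scores)
    exps = [math.exp(s - max_score) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Blend the values according to the weights.
    dim = len(values[0])
    output = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]
    return output, weights

keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0], [20.0]]
# The query points along the first key, so the first value dominates the result.
output, weights = soft_lookup([5.0, 0.0], keys, values)
```

Unlike a hard dictionary lookup, every value contributes a little; the result here lands just above 10 rather than exactly on it.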

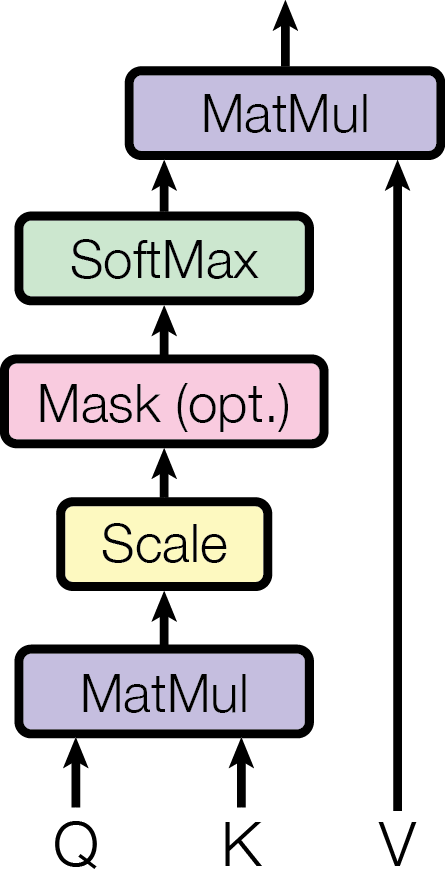

Scaled Dot-Product Attention

The Transformer uses a specific form of attention called Scaled Dot-Product Attention.

Given:

- Queries $Q \in \mathbb{R}^{n \times d_k}$ — what we’re looking for

- Keys $K \in \mathbb{R}^{m \times d_k}$ — what we’re looking in

- Values $V \in \mathbb{R}^{m \times d_v}$ — what we retrieve

The attention output is:

$$\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V$$

Step by step

- Compute compatibility scores: $QK^T$ gives an $n \times m$ matrix of dot products. Entry $(i, j)$ measures how much query $i$ matches key $j$.

- Scale: Divide by $\sqrt{d_k}$. Without scaling, large $d_k$ values push dot products into regions where softmax has very small gradients.

- Normalize: Apply softmax row-wise. Each query now has a probability distribution over keys.

- Retrieve: Multiply by $V$. Each output is a weighted combination of values.
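The four steps can be sketched in plain Python. This is a minimal illustration that uses nested lists in place of an array library, with no batching or masking; the toy matrices are invented for the example.

```python
import math

def softmax(row):
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(Q, K, V):
    """Q is n x d_k, K is m x d_k, V is m x d_v (lists of lists).

    Returns (output, weights) with shapes n x d_v and n x m.
    """
    d_k = len(K[0])
    d_v = len(V[0])
    # 1. Compatibility scores: entry (i, j) is the dot product of query i and key j.
    scores = [[sum(q * k for q, k in zip(q_row, k_row)) for k_row in K] for q_row in Q]
    # 2. Scale by sqrt(d_k).
    scale = math.sqrt(d_k)
    scaled = [[s / scale for s in row] for row in scores]
    # 3. Normalize each row into a distribution over keys.
    weights = [softmax(row) for row in scaled]
    # 4. Retrieve: each output row is a weighted combination of the value rows.
    output = [[sum(w * v[j] for w, v in zip(w_row, V)) for j in range(d_v)]
              for w_row in weights]
    return output, weights

Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # n = 3 queries, d_k = 2
K = [[1.0, 0.0], [0.0, 1.0]]               # m = 2 keys
V = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]     # d_v = 3
output, weights = scaled_dot_product_attention(Q, K, V)
```

The third query matches both keys equally, so its output is the plain average of the two value rows.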

Why scale?

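For large $d_k$, the dot products $q \cdot k$ tend to have large magnitude: if the components of $q$ and $k$ are independent with mean 0 and variance 1, the dot product has mean 0 and variance $d_k$. Large scores push softmax into saturated regions where gradients vanish. Dividing by $\sqrt{d_k}$ brings the variance back to 1.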
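The variance figure follows from a short calculation, under the assumption (the one the paper states in a footnote) that the components of $q$ and $k$ are independent random variables with mean 0 and variance 1:

$$\operatorname{Var}(q \cdot k) = \operatorname{Var}\left(\sum_{i=1}^{d_k} q_i k_i\right) = \sum_{i=1}^{d_k} \operatorname{Var}(q_i k_i) = \sum_{i=1}^{d_k} \mathbb{E}[q_i^2]\,\mathbb{E}[k_i^2] = d_k$$

$$\operatorname{Var}\left(\frac{q \cdot k}{\sqrt{d_k}}\right) = \frac{1}{d_k}\operatorname{Var}(q \cdot k) = 1$$

The second step uses independence of the summands, and the third uses $\mathbb{E}[q_i k_i] = 0$. Learned queries and keys need not satisfy these assumptions exactly, so this is a guide to the typical scale of the scores, not a guarantee.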

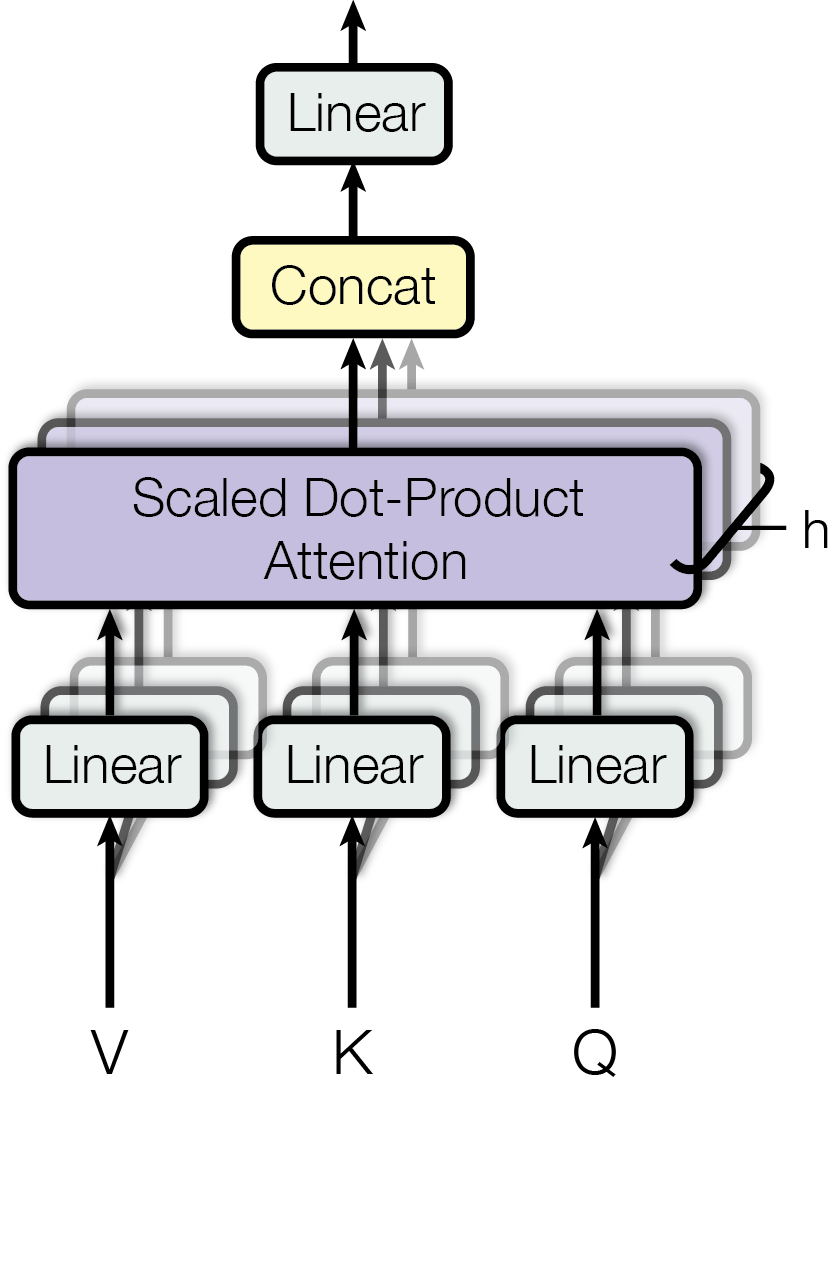

Multi-Head Attention

A single attention head produces one weighted average per query, which makes it hard to capture several kinds of relationship at once. Multi-Head Attention runs multiple attention functions in parallel, each with its own learned projections, and then concatenates the results.

$$\text{MultiHead}(Q, K, V) = \text{Concat}(\text{head}_1, \ldots, \text{head}_h)\, W^O$$

$$\text{where } \text{head}_i = \text{Attention}(QW_i^Q, KW_i^K, VW_i^V)$$

Each head can learn to attend to different things:

- One head might focus on the previous word

- Another might focus on the subject of the sentence

- Another might focus on semantically similar words

The paper uses $h = 8$ heads with $d_k = d_v = 64$ (for $d_{\text{model}} = 512$).
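The bookkeeping behind those numbers can be sketched as follows. The helper `multi_head_shapes` is a hypothetical name for this example; it assumes each head gets $d_k = d_v = d_{\text{model}} / h$ dimensions and ignores bias terms.

```python
def multi_head_shapes(d_model=512, num_heads=8):
    """Return the per-head dimension and projection weight counts (biases ignored)."""
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    d_k = d_v = d_model // num_heads
    # Each head has its own W_i^Q and W_i^K (d_model x d_k) and W_i^V (d_model x d_v).
    per_head = 2 * d_model * d_k + d_model * d_v
    # The output projection W^O maps the concatenated heads (h * d_v) back to d_model.
    output_proj = num_heads * d_v * d_model
    return {
        "d_k": d_k,
        "d_v": d_v,
        "per_head_weights": per_head,
        "total_weights": num_heads * per_head + output_proj,
    }

shapes = multi_head_shapes()
```

With the defaults this gives `d_k = 64` and a total of $4 \cdot 512^2$ projection weights, the same as a single head operating at full dimensionality. Splitting into heads changes how the computation is organized, not how large it is.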

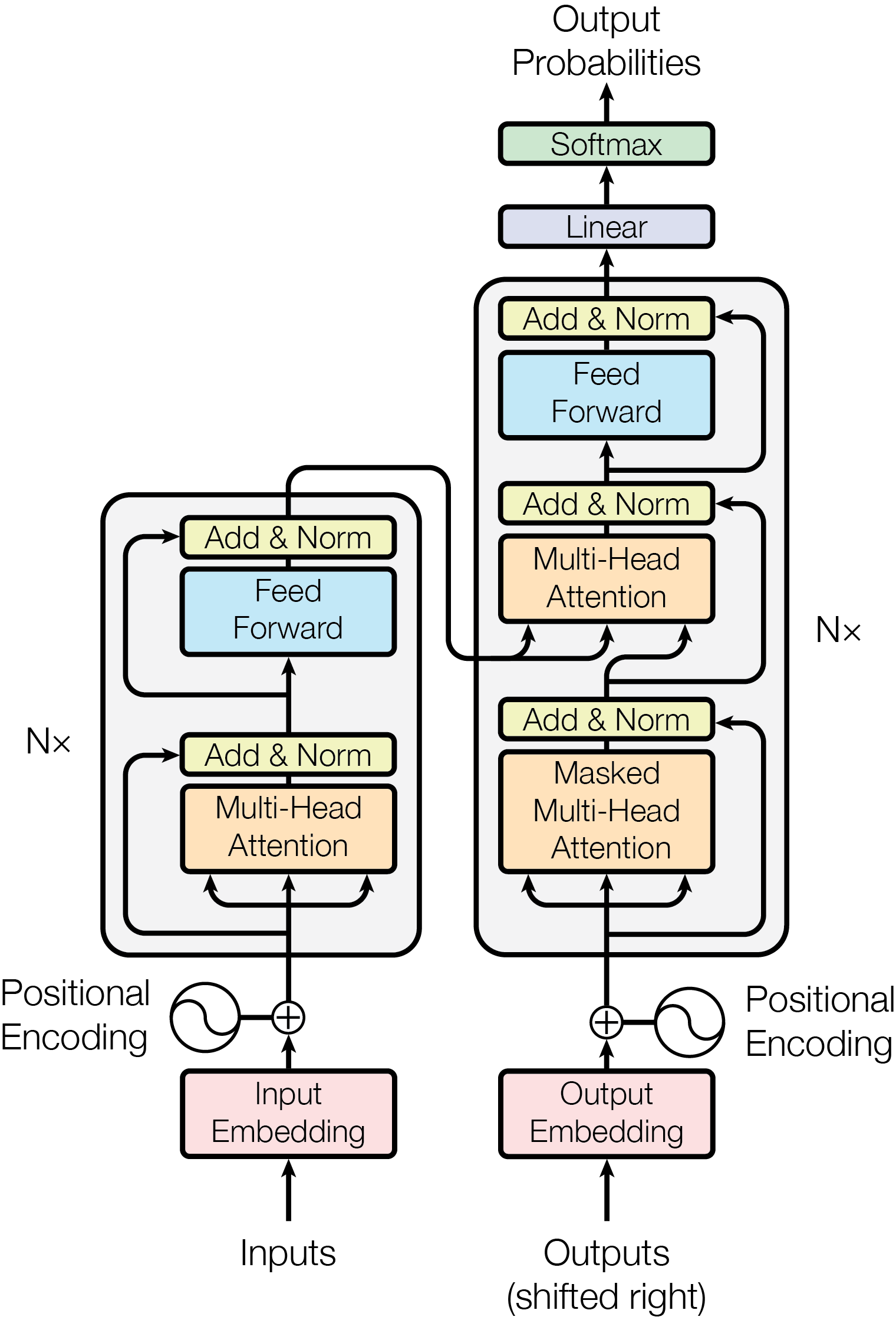

The Transformer Architecture

The Transformer follows the encoder-decoder structure, but it is built entirely from attention and feed-forward layers.

Encoder

Each encoder layer has two sub-layers:

- Multi-head self-attention: Every position attends to every position

- Feed-forward network: Applied independently to each position

Residual connections and layer normalization wrap each sub-layer.
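In the paper, the output of each sub-layer is $\text{LayerNorm}(x + \text{Sublayer}(x))$. A minimal sketch for a single position's vector follows; it omits layer normalization's learned gain and bias, and `toy_sublayer` is a hypothetical stand-in for attention or the feed-forward network.

```python
import math

def layer_norm(x, eps=1e-6):
    """Normalize a vector to zero mean and unit variance (learned gain and bias omitted)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def apply_sublayer(x, sublayer):
    """Compute LayerNorm(x + Sublayer(x)) for one position's vector x."""
    residual = [a + b for a, b in zip(x, sublayer(x))]
    return layer_norm(residual)

def toy_sublayer(x):
    # Hypothetical stand-in for self-attention or the feed-forward network.
    return [2.0 * v for v in x]

y = apply_sublayer([1.0, 2.0, 3.0, 4.0], toy_sublayer)
```

The residual term gives gradients a path around the sub-layer, and the normalization keeps activations on a consistent scale from layer to layer.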

Decoder

Each decoder layer has three sub-layers:

- Masked self-attention: Each position attends only to itself and earlier positions

- Cross-attention: Queries from decoder; keys/values from encoder

- Feed-forward network: Same as encoder
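The masking in the first decoder sub-layer can be sketched as follows: scores for later positions are set to negative infinity before the softmax, so they receive zero weight. This is an illustrative sketch on a raw score matrix, with the function name chosen for this example.

```python
import math

def causal_attention_weights(scores):
    """Apply a causal mask to an n x n score matrix, then softmax each row."""
    n = len(scores)
    weights = []
    for i in range(n):
        # Position i may attend to positions 0..i; later positions get -inf,
        # which the softmax maps to a weight of exactly 0.
        masked = [scores[i][j] if j <= i else float("-inf") for j in range(n)]
        m = max(masked)
        exps = [math.exp(s - m) for s in masked]
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights

# With all-equal scores, each row spreads its weight evenly over the allowed positions.
scores = [[0.0] * 3 for _ in range(3)]
weights = causal_attention_weights(scores)
```

The resulting weight matrix is lower-triangular, which keeps the prediction at a given position from depending on tokens that come after it.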

Positional Encoding

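Self-attention is permutation-equivariant: on its own it has no notion of token order. The Transformer therefore adds positional encodings to the input embeddings, using sine and cosine functions of different frequencies:

$$PE_{(pos, 2i)} = \sin\left(\frac{pos}{10000^{2i/d_{\text{model}}}}\right)$$

$$PE_{(pos, 2i+1)} = \cos\left(\frac{pos}{10000^{2i/d_{\text{model}}}}\right)$$

For any fixed offset $k$, $PE_{pos+k}$ can be written as a linear function of $PE_{pos}$. The authors hypothesized that this would let the model easily learn to attend by relative position.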
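A minimal sketch of the paper's sinusoidal encoding, which places $\sin(pos / 10000^{2i/d_{\text{model}}})$ at even indices and the matching cosine at odd indices, is shown below. It assumes an even `d_model`, and `shift_encoding` is a hypothetical helper added here to illustrate the linear-offset property.

```python
import math

def positional_encoding(pos, d_model):
    """Sinusoidal encoding for one position (d_model assumed even)."""
    pe = []
    for i in range(0, d_model, 2):
        # i is the even index, so the exponent 2i'/d_model is written as i/d_model.
        angle = pos / (10000 ** (i / d_model))
        pe.append(math.sin(angle))   # even index
        pe.append(math.cos(angle))   # odd index
    return pe

def shift_encoding(pe, k, d_model):
    """Compute PE(pos + k) from PE(pos) with coefficients that depend only on k.

    Each (sin, cos) pair is rotated by the angle k * omega, which is a linear map.
    """
    shifted = []
    for i in range(0, d_model, 2):
        omega = 1.0 / (10000 ** (i / d_model))
        s, c = pe[i], pe[i + 1]
        shifted.append(s * math.cos(k * omega) + c * math.sin(k * omega))
        shifted.append(c * math.cos(k * omega) - s * math.sin(k * omega))
    return shifted

pe_5 = positional_encoding(5, 8)
pe_8_direct = positional_encoding(8, 8)
pe_8_shifted = shift_encoding(pe_5, 3, 8)   # agrees with pe_8_direct up to rounding
```

The rotation coefficients never reference `pos`, which is the sense in which the encoding at a fixed offset is a linear function of the encoding at the current position.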

Why Self-Attention?

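In the table below, $n$ is the sequence length, $d$ is the representation dimension, and $k$ is the convolution kernel size.

| Layer Type | Complexity per Layer | Sequential Ops | Max Path Length |
|---|---|---|---|
| Self-Attention | $O(n^2 \cdot d)$ | $O(1)$ | $O(1)$ |
| Recurrent | $O(n \cdot d^2)$ | $O(n)$ | $O(n)$ |
| Convolutional | $O(k \cdot n \cdot d^2)$ | $O(1)$ | $O(\log_k n)$ |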

Self-attention connects all positions in $O(1)$ sequential operations, enabling full parallelization. It also provides a direct path between any two positions, making long-range dependencies easier to learn.
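To get a feel for the per-layer complexity column, the sketch below plugs numbers into the leading-order terms. Constants are dropped, and the sequence lengths and the kernel size `k=3` are arbitrary values chosen for illustration.

```python
def per_layer_cost(n, d, k=3):
    """Leading-order operation counts from the complexity table (constants dropped)."""
    return {
        "self_attention": n * n * d,
        "recurrent": n * d * d,
        "convolutional": k * n * d * d,
    }

# A sentence-length sequence: n is much smaller than d, so self-attention is cheaper.
short_seq = per_layer_cost(n=50, d=512)
# A long sequence: n exceeds d, and the quadratic term in n dominates.
long_seq = per_layer_cost(n=4096, d=512)
```

Self-attention is the cheaper layer whenever $n < d$, which the paper notes is the common case for sentence-level translation. For sequences much longer than $d$, the $n^2$ term becomes the bottleneck.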

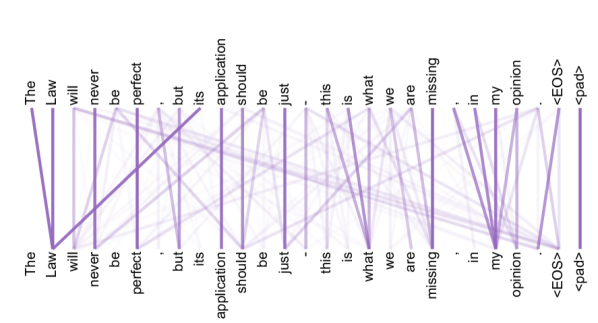

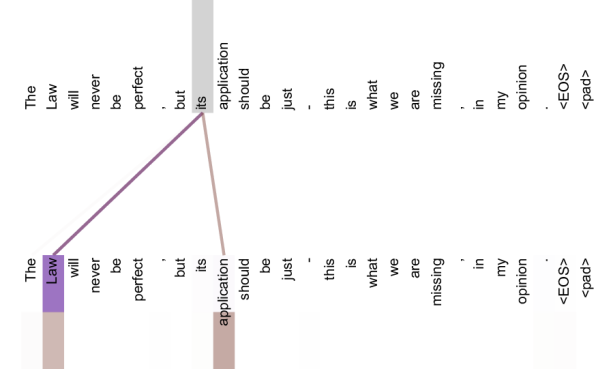

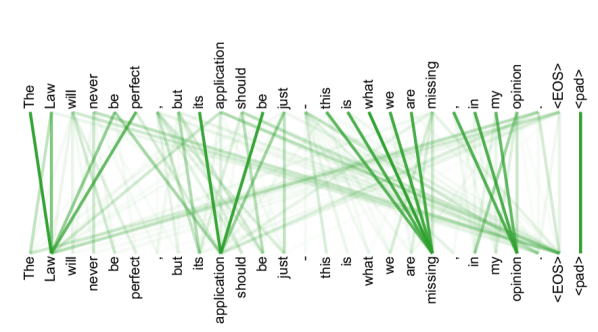

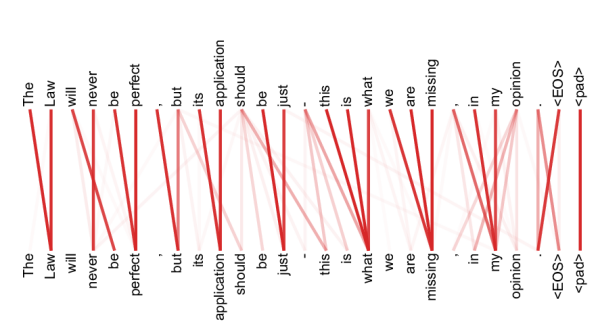

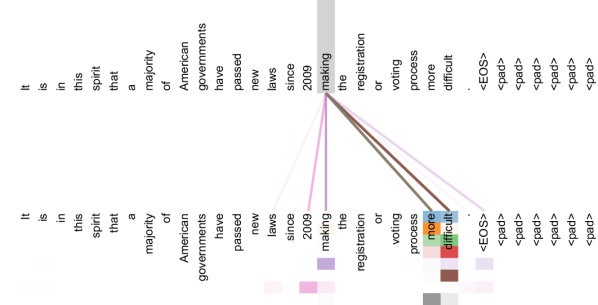

What Attention Heads Learn

The paper visualizes what individual attention heads learn in a trained Transformer. Different heads spontaneously specialize for different linguistic patterns—none of this structure is hard-coded.

Training and Results

Setup:

- Data: WMT 2014 English-German (4.5M pairs) and English-French (36M pairs)

- Hardware: 8 NVIDIA P100 GPUs

- Time: Base model 12 hours; Big model 3.5 days

| Model | EN-DE BLEU | EN-FR BLEU | Training Cost (FLOPs) |
|---|---|---|---|
| Previous SOTA | 26.36 | 41.29 | $7.7 \times 10^{19}$ |
| Transformer (big) | 28.4 | 41.8 | $2.3 \times 10^{19}$ |

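The Transformer achieved state-of-the-art results at a fraction of the training cost: on EN-DE, the big model scores about 2 BLEU higher than the previous best while using roughly 30% of the training FLOPs listed in the table.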

References

- Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., Kaiser, Ł., & Polosukhin, I. (2017). Attention Is All You Need. NeurIPS 2017.

- Bahdanau, D., Cho, K., & Bengio, Y. (2014). Neural Machine Translation by Jointly Learning to Align and Translate. ICLR 2015.

- Ba, J. L., Kiros, J. R., & Hinton, G. E. (2016). Layer Normalization.

Michael Wan Interactive Insights
